In a bid to clamp down on spam and abuse, Periscope today announced a new comment moderation system that is crowdsourced to viewers in real time.

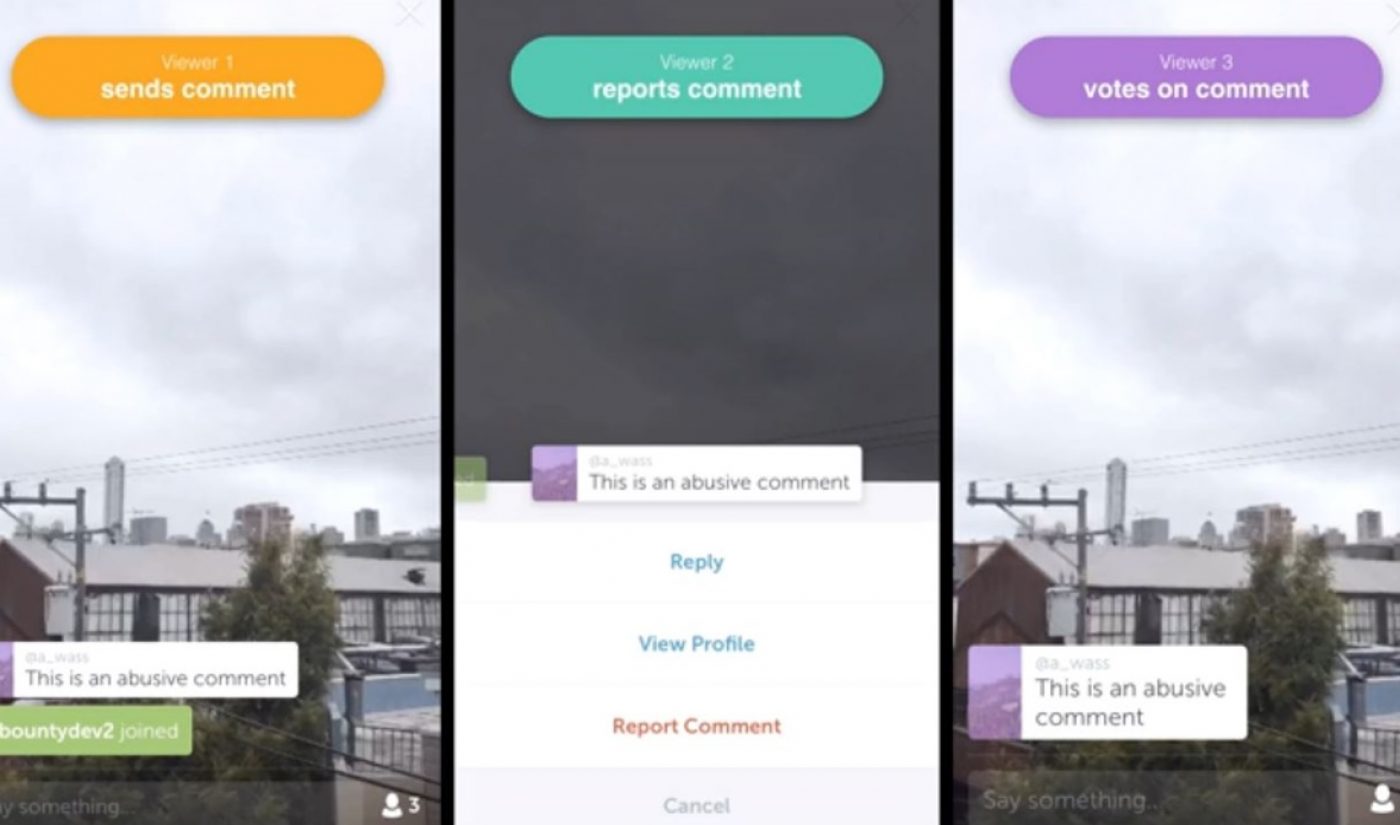

Beginning today, viewers who report an inappropriate comment will no longer see any messages from that commenter for the remainder of a broadcast, Periscope explained in a company blog post. At the same time, after a comment is reported, several randomly selected viewers will be asked to vote on whether the comment in question “is spam, abuse, or looks okay.” All voters will then see the results, and, if a comment has been deemed abusive or spam by a majority, the commenter will be temporarily unable to chat. If repeated offenses occur, that user will lose the ability to chat for the rest of the broadcast.
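For readers who want the flow spelled out, the steps Periscope describes can be sketched roughly as follows. This is only an illustration and not Periscope's actual code. The names (`Broadcast`, `pick_jury`, `handle_report`) are hypothetical. The jury size, the number of strikes before a broadcast-long mute, and the exclusion of the reporter and commenter from the jury are assumptions, since the blog post says only "several" viewers and "repeated offenses."

```python
import random
from collections import Counter
from dataclasses import dataclass, field

JURY_SIZE = 5    # assumption: the post only says "several" viewers
MAX_STRIKES = 2  # assumption: the post only says "repeated offenses"


@dataclass
class Broadcast:
    viewers: set
    hidden: dict = field(default_factory=dict)         # reporter -> commenters hidden from them
    strikes: Counter = field(default_factory=Counter)  # commenter -> upheld reports
    banned: set = field(default_factory=set)           # muted for the rest of the broadcast


def pick_jury(broadcast, reporter, commenter, rng=random):
    """Randomly select viewers to vote (excluding both parties is an assumption)."""
    pool = sorted(broadcast.viewers - {reporter, commenter})
    return rng.sample(pool, min(JURY_SIZE, len(pool)))


def handle_report(broadcast, reporter, commenter, votes):
    """Apply one report, given jury votes of 'spam', 'abuse', or 'okay'."""
    # The reporter stops seeing this commenter regardless of the vote.
    broadcast.hidden.setdefault(reporter, set()).add(commenter)

    tally = Counter(votes)
    flagged = tally["spam"] + tally["abuse"]
    if flagged * 2 <= len(votes):  # no strict majority for spam or abuse
        return "no_action"

    broadcast.strikes[commenter] += 1
    if broadcast.strikes[commenter] >= MAX_STRIKES:
        broadcast.banned.add(commenter)
        return "muted_for_broadcast"
    return "temporary_mute"
```

In this sketch a first upheld report yields a temporary mute, and a second one removes the commenter's ability to chat for the remainder of the broadcast.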

Asking users to vote in real time helps take context into account, Periscope explains. “For example, a comment that might be okay in a comedy broadcast might not be okay somewhere else.”

Intricate though this process may sound, Periscope insists it is “very lightweight” and “should last just a matter of seconds.” It is also optional. Broadcasters can choose not to have their streams moderated, and viewers can use the app's Settings to opt out of ever being selected to vote.

The new system will complement other tools that Periscope has put in place to keep its community safe. Users can report ongoing harassment or abuse directly to the Periscope team, who will then make a decision about the offending content or account. Additionally, broadcasters can block and remove users from their streams, and commenters can select who is able to view their comments.