TikTok wants to give users a bit more clarity about why their videos have been removed from the platform due to Community Guidelines violations.

For the past few months, the platform says it has been testing a new notification system that explains its enforcement actions and tells users which specific policy they violated, rather than simply informing them that a violation has occurred. The results of the test have been promising, TikTok says, with visits to its Community Guidelines page tripling and a 14% reduction in appeal requests, which users can lodge if they believe that a takedown was made in error.

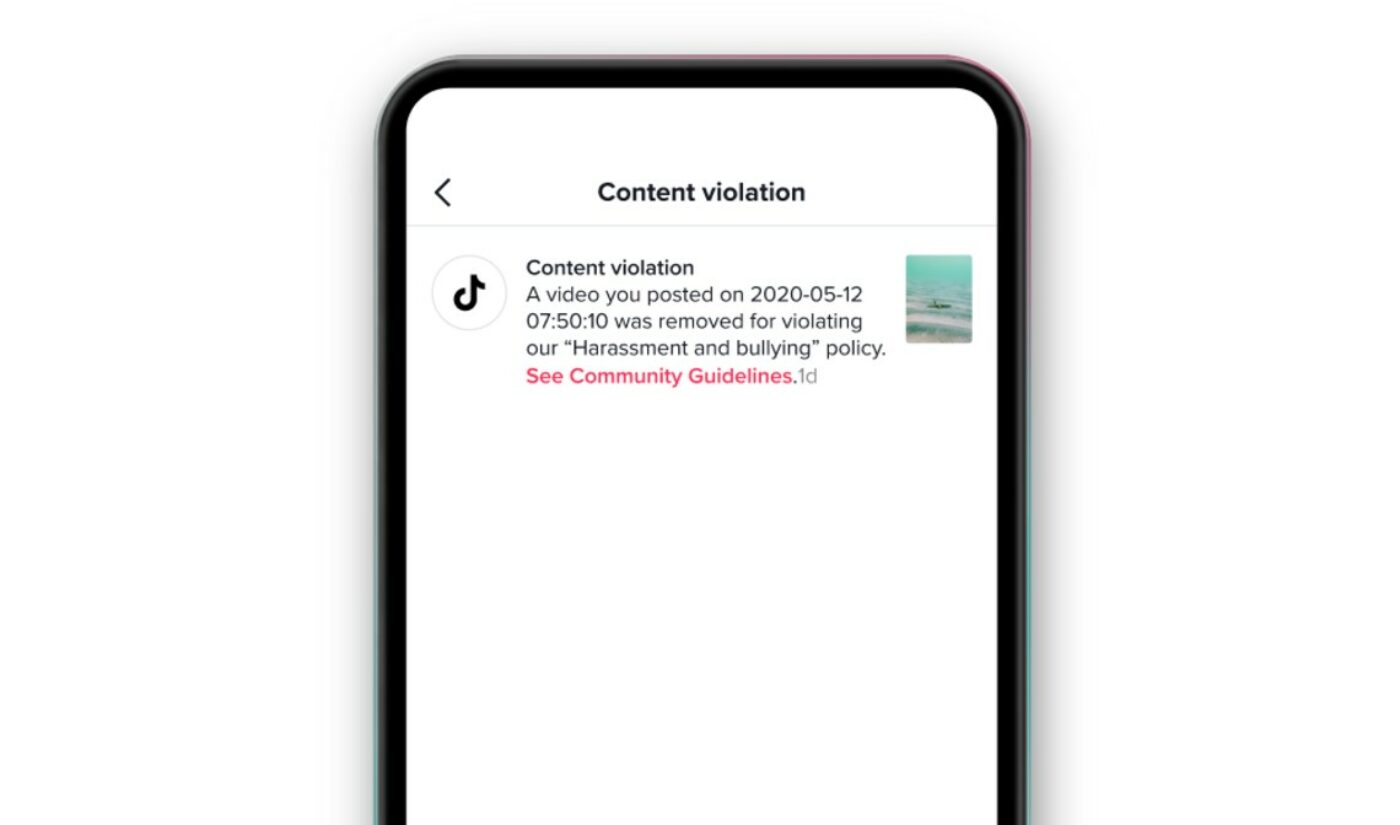

TikTok has today rolled out the new notification system globally. Along with the name of the policy that the user violated and a link to its Guidelines (pictured above), TikTok will also provide users with information about submitting an appeal.

And in cases where videos are flagged for self-harm or suicide-related content, TikTok says it will now provide users with expert resources through a second notification as a means of support. That said, these resources appear to be a bit vague: they suggest that users seek professional help via local law enforcement or a suicide hotline, reach out to friends and family, or take a break from whatever is causing them undue stress.

“Being transparent with our community is key to continuing to earn and maintain trust,” the company said of the rollout. “We’re glad to be able to bring this new notification system to all our users, and we’ll keep working to improve the ways we help our community understand our policies as we continue to build a safe and supportive platform.”