Former YouTube engineer Guillaume Chaslot is praising his one-time employer’s decision to stop recommending conspiracy theory videos.

“YouTube’s announcement is a great victory which will save thousands,” he tweeted as part of a lengthy thread. “It’s only the beginning of a more humane technology. Technology that empowers all of us, instead of deceiving the most vulnerable.”

Chaslot, who helped build YouTube’s recommendation algorithm before leaving Google in 2013, has previously addressed the long-running problem of conspiracy theories propagating on YouTube. He has a website devoted to the topic that aims to “inform citizens on the mechanisms behind the algorithms that determine and shape our access to information.”


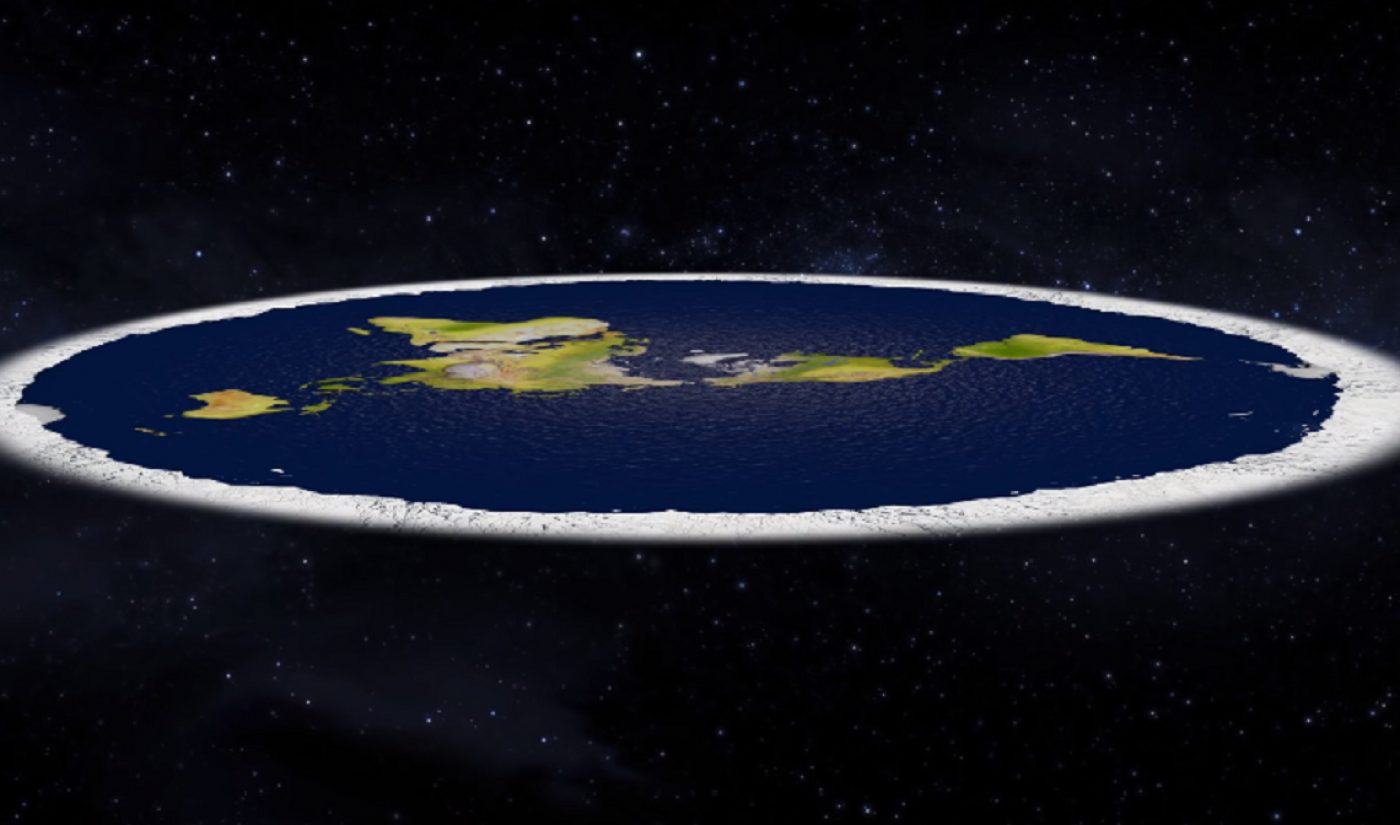

Most recently, Chaslot spoke up after a ‘flat earth’ convention in November, where a number of attendees asserted they’d been brought into the theory’s fold because of YouTube videos on the subject. (They’re far from the first to say YouTube led them to the theory — notably, NBA player Kyrie Irving became a vocal supporter of flat earth after being turned on to the theory by YouTube and Instagram videos.) Chaslot called those people “the canaries in the coal mine,” signaling the danger of YouTube’s algorithm.

Late last month, YouTube revealed its plan to mitigate that danger: a new algorithm. Not one to replace its longtime recommendation algorithm, but one to find and flag conspiracy theory videos, then block the recommendation algorithm from suggesting users watch them.

In his thread about the decision, Chaslot reiterated exactly why YouTube’s recommendation algorithm tends to glom on to conspiracy videos.

Brian is my best friend’s in-law. After his dad died in a motorcycle accident, he became depressed. He fell down the rabbit hole of YouTube conspiracy theories, with flat earth, aliens & co. Now he does not trust anyone. He stopped working, seeing friends, and wanting kids. 2/

— Guillaume Chaslot (@gchaslot) February 9, 2019

Brian spends most of his time watching YouTube, supported by his wife.

For his parents, family and friends, his story is heartbreaking.

But from the point of view of YouTube’s AI, he’s a jackpot. 3/

— Guillaume Chaslot (@gchaslot) February 9, 2019

We designed YT’s AI to increase the time people spend online, because it leads to more ads. The AI considers Brian as a model that *should be reproduced*. It takes note of every single video he watches & uses that signal to recommend it to more people 4/ https://t.co/xFVHkVnNLy

— Guillaume Chaslot (@gchaslot) February 9, 2019

How many people like Brian are allured down such rabbit holes every day?

By design, the AI will try to get as many as possible. 5/

— Guillaume Chaslot (@gchaslot) February 9, 2019

Ultimately, Chaslot says the new algorithm is a “historic victory.”

“This AI change will save thousands from falling into such rabbit holes,” he wrote.