YouTube boasted yesterday that in last year’s fourth quarter, it deleted 8.3 million videos that violated its Community Guidelines.

The announcement is part of a new quarterly report that YouTube will share in an effort to be more transparent, the company explained in a blog post — though it arrives amid a fresh wave of outrage after CNN reported last week that ads for more than 300 brands and government agencies ran against videos touting hate speech, pedophilia, conspiracy theories, and propaganda.

In addition to its first-ever YouTube Community Guidelines Enforcement Report — which by year’s end will come to include additional data about comments, speed of removal, and removal reasons — YouTube is also enabling creators to see the status of any of their own flagged videos in a new Reporting History dashboard.


In the Enforcement Report, YouTube says that machines are its most impactful tool in policing content, both in relatively low-volume categories, like violent extremism, and in higher-volume areas, such as spam. From last October to December, the company deleted a total of 8.3 million videos, which it notes represented “a fraction of a percent of YouTube’s total views during this time period.” Of those 8.3 million videos, 6.7 million were flagged by machines, 1.1 million were flagged by so-called Trusted Flaggers, 400,000 were flagged by individual YouTube users, 64,000 were flagged by NGOs, and 73 were flagged by governmental agencies.

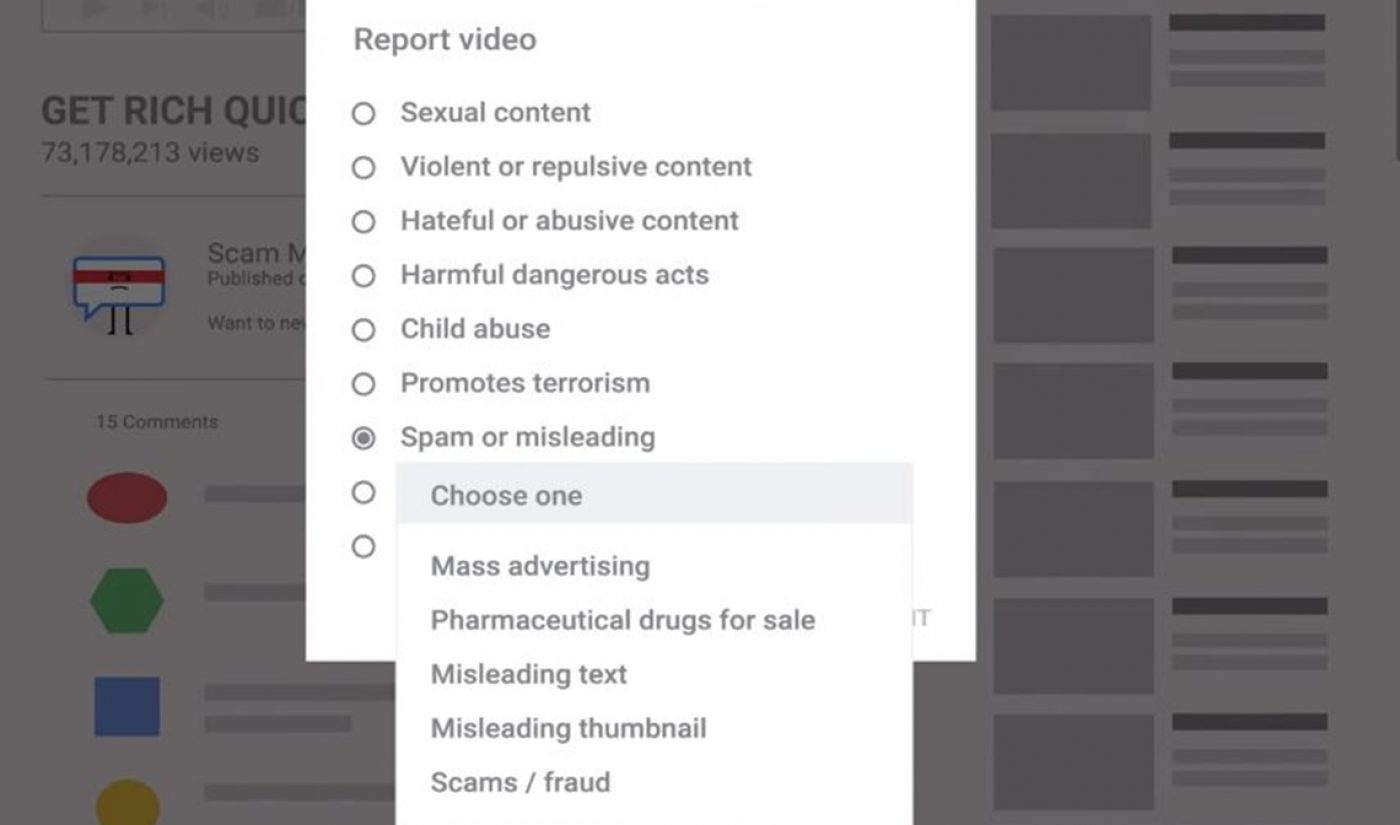

Of the 6.7 million videos that were flagged by YouTube’s machines, 76% were removed before they received a single view. And as for human flags, YouTube says that a total of 9.3 million videos were flagged by individual users last quarter, with India accounting for the majority, followed by the U.S. and Brazil. Sexual videos were the most commonly flagged form of content (30%), according to YouTube, followed by spam (26%), hateful or abusive speech (16%), and violent or repulsive scenes (14%).

As for Google’s stated vow to bring 10,000 people onboard by year’s end to combat objectionable content, YouTube says that it has “staffed the majority of additional roles needed to reach our contribution to meeting that goal.” The company has also hired full-time experts in violent extremism, counterterrorism, and human rights to help it combat the spread of objectionable content, and currently works with a network of over 150 academics, government partners, and NGOs.

You can check out a video above detailing the flagging and removal process as it currently stands.